Developers are creative problem solvers by nature.

Few software development teams today are being asked to do less work. Backlogs continue to grow as applications become ever more complex, more business-critical, or both.

Assuming all those user stories really are necessary, that leaves two basic choices: add more developers to the team, or find ways to get more done with the people you already have.

The second option is the go-to for most teams. After all, finding people with the right skills is a slow and expensive business, even if you have the luxury of extra headcount budget. That’s where modern development practices like agile and DevOps come in, along with the huge range of automation tools available today.

But as you change the way you build applications, how can you judge what’s really making a difference, how big the impact might be and where there’s still room for improvement?

The DORA metrics have become a widely used way to measure software development performance. Let’s take a look at what those metrics are and why they have been chosen.

What is DORA?

DevOps Research and Assessment (DORA) is the largest and longest-running research program of its kind. Over the years, DORA researchers have analyzed feedback from thousands of development teams to understand which metrics are associated with high performance and how teams can apply those metrics to improve their software development processes.

Google Cloud currently operates DORA, but the research began as a collaboration between Puppet and DORA co-founders Dr. Nicole Forsgren, Gene Kim, and Jez Humble. From 2014 to 2017, this team produced a series of reports that set out valid and reliable ways to measure software delivery performance.

They then tied that performance to predictors that can drive business outcomes and, in 2018, published the book Accelerate to share their research with the world.

Armed with a scientific way to measure modern software development and the capabilities that impact it (or a more scientific and value-led approach than most, at least), the team have continued work on the study, publishing a new State of DevOps report every year.

What are the DORA metrics?

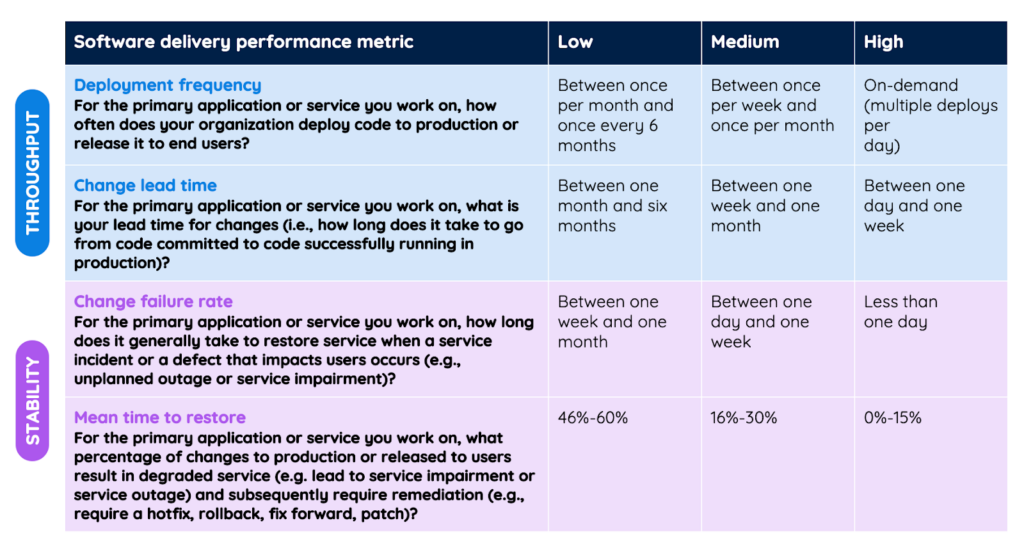

By 2021, DORA had defined four key measures of software delivery performance: deployment frequency and change lead time assess throughput, while mean time to restore and change failure rate assess stability. A fifth metric, reliability, measures operational performance.

Deployment Frequency

This is often the first metric teams look at when considering performance improvements, not least because the ability to deploy frequently is a key enabler of faster software delivery. It’s also one of the easiest metrics to collect.

Deployment frequency measures how often development teams successfully deploy changes to production or release them to end users. What counts as success varies with the system in question, but the crucial point is that this metric doesn’t just measure volume of change – deploys that always break something or only get rolled out to 3% of users might not count!
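As a rough illustration of how this metric might be computed from a deployment log, here is a minimal Java sketch. The `ProductionDeployment` record, its fields, and the 50% rollout threshold in the example rule are all hypothetical; the predicate parameter stands in for whatever definition of success fits your own system.

```java
import java.time.Duration;
import java.time.Instant;
import java.util.List;
import java.util.function.Predicate;

// Hypothetical record of one production deployment (field names are illustrative).
record ProductionDeployment(Instant finishedAt, boolean succeeded, double rolloutFraction) {}

final class DeploymentFrequency {
    private DeploymentFrequency() {}

    /**
     * Average number of deployments per day in the window [from, to) that satisfy
     * the team's own definition of a successful deployment.
     */
    static double perDay(List<ProductionDeployment> deployments, Instant from, Instant to,
                         Predicate<ProductionDeployment> countsAsSuccess) {
        if (!to.isAfter(from)) throw new IllegalArgumentException("empty window");
        long counted = deployments.stream()
                .filter(d -> !d.finishedAt().isBefore(from) && d.finishedAt().isBefore(to))
                .filter(countsAsSuccess)
                .count();
        double days = Duration.between(from, to).toMillis() / (double) Duration.ofDays(1).toMillis();
        return counted / days;
    }

    public static void main(String[] args) {
        Instant start = Instant.parse("2024-01-01T00:00:00Z");
        List<ProductionDeployment> log = List.of(
                new ProductionDeployment(start.plus(Duration.ofHours(3)), true, 1.0),
                new ProductionDeployment(start.plus(Duration.ofHours(9)), true, 0.03),  // tiny rollout
                new ProductionDeployment(start.plus(Duration.ofHours(15)), false, 1.0), // broke something
                new ProductionDeployment(start.plus(Duration.ofHours(30)), true, 1.0));
        // Hypothetical success rule: the deploy succeeded and reached at least half of users.
        Predicate<ProductionDeployment> rule = d -> d.succeeded() && d.rolloutFraction() >= 0.5;
        System.out.println(perDay(log, start, start.plus(Duration.ofDays(2)), rule)); // prints 1.0
    }
}
```

Passing the success rule in as a parameter keeps the counting logic separate from the judgment call about what "successful" means, which, as noted above, differs between systems.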

While it’s true that not every change makes a meaningful impact, deployment frequency provides a useful proxy for how quickly teams can deliver value to end users – not just how quickly they can make code changes.

In 2022 DORA defined high performance in this category as the ability to deploy on demand, multiple times per day.

Change Lead Time

As measured by DORA, change lead time is the time it takes for a change to go from commit to running in production. Breaking this process into phases shows where most of the time is spent. It’s effectively the flip side of deployment frequency: not how often you deliver value, but how long it takes you to do so each time. Change lead time is a useful reflection of how quickly you can respond to changing customer needs and unexpected events.
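The sketch below shows one way to turn commit and deployment timestamps into a median lead time, split into two phases. The `ChangeTimeline` record and the use of the merge as the phase boundary are assumptions for illustration; real data would come from your version control and deployment tooling. The median is used here simply because a few very slow changes can distort an average.

```java
import java.time.Duration;
import java.time.Instant;
import java.util.List;
import java.util.function.Function;

// Hypothetical timestamps for one change; the merge point is an illustrative phase boundary.
record ChangeTimeline(Instant firstCommitAt, Instant mergedAt, Instant deployedAt) {}

final class ChangeLeadTime {
    private ChangeLeadTime() {}

    // Phases of the commit-to-production journey.
    static Duration total(ChangeTimeline c)         { return Duration.between(c.firstCommitAt(), c.deployedAt()); }
    static Duration commitToMerge(ChangeTimeline c) { return Duration.between(c.firstCommitAt(), c.mergedAt()); }
    static Duration mergeToDeploy(ChangeTimeline c) { return Duration.between(c.mergedAt(), c.deployedAt()); }

    /** Median of one phase across all changes (mean of the two middle values for an even count). */
    static Duration median(List<ChangeTimeline> changes, Function<ChangeTimeline, Duration> phase) {
        if (changes.isEmpty()) throw new IllegalArgumentException("no changes");
        List<Duration> sorted = changes.stream().map(phase).sorted().toList();
        int mid = sorted.size() / 2;
        return sorted.size() % 2 == 1
                ? sorted.get(mid)
                : sorted.get(mid - 1).plus(sorted.get(mid)).dividedBy(2);
    }

    public static void main(String[] args) {
        Instant t = Instant.parse("2024-01-01T09:00:00Z");
        List<ChangeTimeline> changes = List.of(
                new ChangeTimeline(t, t.plus(Duration.ofHours(4)), t.plus(Duration.ofHours(6))),
                new ChangeTimeline(t, t.plus(Duration.ofHours(20)), t.plus(Duration.ofHours(30))),
                new ChangeTimeline(t, t.plus(Duration.ofHours(2)), t.plus(Duration.ofHours(50))));
        System.out.println("total:           " + median(changes, ChangeLeadTime::total));         // PT30H
        System.out.println("commit to merge: " + median(changes, ChangeLeadTime::commitToMerge)); // PT4H
        System.out.println("merge to deploy: " + median(changes, ChangeLeadTime::mergeToDeploy)); // PT10H
    }
}
```

In this made-up data the slowest change spends most of its time after merge, which is the kind of bottleneck a phase breakdown is meant to surface.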

Note that the strict DORA definition of change lead time does not capture all the time a developer spends working on code, only the time between the first commit and deployment. Commits provide a consistent and easily measurable data point; in a mature agile or DevOps process they will happen early and often, but that may not be the case for all teams. For those teams, the DORA metric may give a less accurate picture of how long work really takes. (Conversely, the agile ‘cycle time’ metric tries to capture how long a task takes from start to finish, but often uses an earlier definition of ‘done’ than production deployment.)

In 2022 DORA defined high performance in this category as a lead time of between one day and one week.

Change Failure Rate

After looking at how quickly and how often you can deliver value, this metric considers how effectively you can do so. The DORA definition of change failure rate has evolved over time, in large part to reflect the fact that problems caused by code changes may not always be definitive enough for people to see them as a failure – even when they have significant negative impact. The 2022 DORA State of DevOps report defines this metric as the percentage of changes that result in degraded service and hence require remediation (e.g. hotfix, rollback, additional development).
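Expressed as arithmetic, this definition is simply failed changes divided by total changes. Below is a minimal Java sketch, assuming each production change has already been labelled by whether it degraded service and needed remediation; the `ProductionChange` record and that labelling step are hypothetical, and in practice deciding what counts as "degraded" is the hard part.

```java
import java.util.List;

// Hypothetical outcome of one production change, labelled after the fact.
record ProductionChange(String id, boolean neededRemediation) {}

final class ChangeFailureRate {
    private ChangeFailureRate() {}

    /** Percentage of changes that degraded service and required remediation (hotfix, rollback, etc.). */
    static double percent(List<ProductionChange> changes) {
        if (changes.isEmpty()) throw new IllegalArgumentException("rate is undefined with no changes");
        long failed = changes.stream().filter(ProductionChange::neededRemediation).count();
        return 100.0 * failed / changes.size();
    }

    public static void main(String[] args) {
        List<ProductionChange> changes = List.of(
                new ProductionChange("c1", false),
                new ProductionChange("c2", true),  // rolled back after degrading service
                new ProductionChange("c3", false),
                new ProductionChange("c4", false));
        System.out.printf("change failure rate: %.1f%%%n", percent(changes)); // 25.0%
    }
}
```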

Change failure rate can provide a window onto the amount of time spent by teams on rework rather than high-value new development. It can also be combined with other metrics to provide a view of the impact of change failures on customer satisfaction.

Teams that are new to agile/DevOps may fear that improving deployment frequency and change lead time will result in a higher change failure rate. In a robust process, the opposite tends to be true. Small deployments, however frequent, are typically better understood and carry less risk because they simply involve less change. And when failures do occur, a team that is used to shipping small changes quickly is much better placed to resolve them fast.

In 2022 DORA defined high performance in this category as a failure rate of between 0% and 15%.

Mean Time to Restore

This metric focuses on how long it takes to diagnose, develop and deploy a fix when problems are detected. It’s essentially a specific subset of the deployment frequency and change lead time metrics, but it is likely to differ from the aggregate figures because teams usually have processes in place to expedite remediation according to the severity of a failure’s impact.
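To illustrate why the figure can differ by severity, here is a minimal Java sketch that averages time to restore across incidents, both overall and per severity level. The `Incident` record, the numeric severity scale (1 as most severe), and measuring from detection to restoration are all assumptions; teams may start the clock at a different point.

```java
import java.time.Duration;
import java.time.Instant;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;
import java.util.stream.Collectors;

// Hypothetical incident record; severity levels are illustrative (1 = most severe).
record Incident(int severity, Instant detectedAt, Instant restoredAt) {
    Duration timeToRestore() { return Duration.between(detectedAt, restoredAt); }
}

final class TimeToRestore {
    private TimeToRestore() {}

    /** Arithmetic mean of time to restore across the given incidents. */
    static Duration mean(List<Incident> incidents) {
        if (incidents.isEmpty()) throw new IllegalArgumentException("no incidents");
        Duration sum = incidents.stream()
                .map(Incident::timeToRestore)
                .reduce(Duration.ZERO, Duration::plus);
        return sum.dividedBy(incidents.size());
    }

    /** Mean time to restore for each severity level, ordered by severity. */
    static Map<Integer, Duration> meanBySeverity(List<Incident> incidents) {
        Map<Integer, List<Incident>> grouped = incidents.stream()
                .collect(Collectors.groupingBy(Incident::severity, TreeMap::new, Collectors.toList()));
        Map<Integer, Duration> result = new TreeMap<>();
        grouped.forEach((severity, group) -> result.put(severity, mean(group)));
        return result;
    }

    public static void main(String[] args) {
        Instant t = Instant.parse("2024-03-01T12:00:00Z");
        List<Incident> incidents = List.of(
                new Incident(1, t, t.plus(Duration.ofMinutes(30))),
                new Incident(1, t, t.plus(Duration.ofMinutes(90))),
                new Incident(2, t, t.plus(Duration.ofHours(10))));
        System.out.println("overall:     " + mean(incidents));           // PT4H
        System.out.println("by severity: " + meanBySeverity(incidents)); // {1=PT1H, 2=PT10H}
    }
}
```

In this invented example the severe incidents are fixed within an hour on average, while a single low-severity incident pulls the overall mean up to four hours.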

Mean time to restore (MTTR) is important to track because, while slow delivery of change has an opportunity cost in terms of business value, change failures are likely to have an actively negative impact on business performance and customer satisfaction.

DORA have recently adjusted the name of this metric, changing it to ‘time to restore service’, but the basic question remains much the same: how long does it take to address a service incident or defect that impacts users?

In 2022 DORA defined high performance in this category as an average time to restore of less than one day.

Reliability

DORA introduced a fifth metric – reliability – in 2021. Study participants rated their ability to meet or exceed reliability targets. Factors such as availability, latency, performance, and scalability were considered. Reliability remains part of the DORA survey analysis, but without specific benchmark metrics.
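DORA does not publish benchmark figures for reliability, but a team’s own reliability targets are often expressed as service level objectives. As a hedged illustration only, the sketch below checks measured availability against such a target; the `AvailabilityCheck` record, the request counts, and the 99.9% target are hypothetical, and latency, performance, and scalability targets would each need a check of their own.

```java
// Hypothetical availability measurement over some reporting window.
record AvailabilityCheck(long successfulRequests, long totalRequests, double target) {
    AvailabilityCheck {
        if (totalRequests <= 0 || successfulRequests < 0 || successfulRequests > totalRequests)
            throw new IllegalArgumentException("invalid request counts");
        if (target <= 0.0 || target > 1.0)
            throw new IllegalArgumentException("target must be in (0, 1]");
    }

    /** Fraction of requests that succeeded. */
    double availability() { return (double) successfulRequests / totalRequests; }

    /** True when measured availability meets or exceeds the target. */
    boolean meetsTarget() { return availability() >= target; }

    public static void main(String[] args) {
        AvailabilityCheck month = new AvailabilityCheck(999_400, 1_000_000, 0.999);
        // prints "availability 99.94%, meets target: true"
        System.out.printf("availability %.2f%%, meets target: %b%n",
                100 * month.availability(), month.meetsTarget());
    }
}
```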

A summary of the DORA State of DevOps 2022 metrics
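The 2022 high-performance thresholds described above can also be encoded as a simple check. This is a minimal Java sketch, not an official DORA tool: the `DoraSnapshot` record is hypothetical, "on demand, multiple times per day" is approximated as more than one deployment per day, and any lead time up to one week is treated as meeting the "between one day and one week" band, on the assumption that faster is at least as good. Reliability is left out because DORA gives no benchmark for it.

```java
import java.time.Duration;

// Hypothetical snapshot of one team's measured DORA metrics.
record DoraSnapshot(double deploysPerDay, Duration leadTime,
                    double changeFailurePercent, Duration timeToRestore) {}

final class HighPerformance2022 {
    private HighPerformance2022() {}

    // Assumption: "on demand, multiple times per day" approximated as more than one deploy per day.
    static boolean deploymentFrequency(DoraSnapshot s) { return s.deploysPerDay() > 1.0; }

    // Assumption: anything up to one week passes, including lead times under a day.
    static boolean changeLeadTime(DoraSnapshot s) {
        return s.leadTime().compareTo(Duration.ofDays(7)) <= 0;
    }

    // Failure rate between 0% and 15%.
    static boolean changeFailureRate(DoraSnapshot s) {
        return s.changeFailurePercent() >= 0.0 && s.changeFailurePercent() <= 15.0;
    }

    // Time to restore of less than one day.
    static boolean timeToRestore(DoraSnapshot s) {
        return s.timeToRestore().compareTo(Duration.ofDays(1)) < 0;
    }

    static boolean meetsAll(DoraSnapshot s) {
        return deploymentFrequency(s) && changeLeadTime(s) && changeFailureRate(s) && timeToRestore(s);
    }

    public static void main(String[] args) {
        DoraSnapshot team = new DoraSnapshot(3.0, Duration.ofDays(2), 10.0, Duration.ofHours(4));
        System.out.println(meetsAll(team));    // true
        DoraSnapshot riskier = new DoraSnapshot(3.0, Duration.ofDays(2), 20.0, Duration.ofHours(4));
        System.out.println(meetsAll(riskier)); // false: change failure rate above 15%
    }
}
```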

The Impact of Unit Testing on DORA Metrics

Effective unit testing is a powerful way to improve software delivery performance as assessed by the DORA metrics. In fact, many people would argue that delivering changes more quickly, more often, and with fewer issues simply isn’t possible without the early warning of regressions that good unit testing provides.

But writing and maintaining comprehensive unit test suites for large applications takes considerable time and effort (research suggests at least 20% of developer time is spent on writing unit tests) – time which doesn’t actually deliver the business value the DORA metrics are attempting to measure.

Diffblue Cover solves this problem for Java teams by using AI to write and maintain entire Java unit test suites completely autonomously. Cover operates at any scale, from individual methods within your IDE to an entire codebase as an integrated part of your automated Continuous Integration pipeline.

Learn more about DORA research and metrics at dora.dev, or get in touch with our team to learn more about autonomous Java unit test writing.