Suppose you have spent the day coding a new feature in your application. You now need to write unit tests for the code you’ve just added. What test cases should you write for your code? Do you write tests so all lines are covered? All branches? How do you ensure the tests you write adequately protect against regressions?

Read on: this post shows you how to apply two techniques for choosing unit test cases that help minimize regression risk.

Equivalence Partitioning (EP)

This first technique is simple; in fact, you’ve probably used it without realizing it. The idea is that the inputs to a method under test can be divided into sets, or “partitions”, whose members the code should treat identically. Because all values in a partition are considered equivalent, checking one input value from each partition is taken as sufficient evidence that every other value in that partition produces the same outcome.

For example, a fictional postal service may wish to classify items by weight so they can charge different amounts for postage:

| Weight | Classification |
|---|---|
| less than 500g | letter |
| 500g to less than 2kg | small parcel |
| 2kg and above | large parcel |

A simple implementation for this could be:

public class SortPostage {

public static Item classifyItemType(int itemWeight) {

if (itemWeight < 0) {

throw new IllegalArgumentException();

}

if (itemWeight < 500) {

return Item.LETTER;

} else if (itemWeight < 2000 ) {

return Item.SMALL_PARCEL;

} else {

return Item.LARGE_PARCEL;

}

}

}

enum Item {

LETTER, SMALL_PARCEL, LARGE_PARCEL;

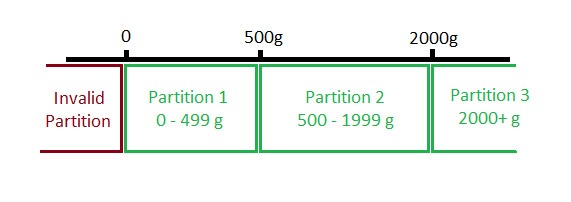

}The partitions in the above code would be:

The test cases to use here could be:

| Partition 1 | Partition 2 | Partition 3 |
|---|---|---|
| 55 | 660 | 4300 |

But it is equally valid to use:

| Partition 1 | Partition 2 | Partition 3 |
|---|---|---|
| 240 | 1800 | 2050 |

It’s often also useful to include a test case for the invalid partition to check the negative input response is correctly handled:

| Invalid Partition | Partition 1 | Partition 2 | Partition 3 |
|---|---|---|---|
| -10 | 55 | 660 | 4300 |
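As a rough sketch, the four inputs in the table above could be turned into checks like the ones below. To stay dependency-free this uses plain Java rather than a test framework; in a real project you would more likely write these as JUnit tests. The class name `PostageEpSketch` and the helpers `check` and `rejects` are made up for this illustration, and the method under test is copied in so the example compiles on its own.

```java
// Dependency-free sketch: one representative input per equivalence partition.
final class PostageEpSketch {

    enum Item { LETTER, SMALL_PARCEL, LARGE_PARCEL }

    // Copy of the method under test from the example above.
    static Item classifyItemType(int itemWeight) {
        if (itemWeight < 0) {
            throw new IllegalArgumentException("itemWeight must not be negative");
        }
        if (itemWeight < 500) {
            return Item.LETTER;
        } else if (itemWeight < 2000) {
            return Item.SMALL_PARCEL;
        } else {
            return Item.LARGE_PARCEL;
        }
    }

    // Hypothetical helper: fails loudly if the classification is not the expected one.
    static void check(Item expected, int weight) {
        Item actual = classifyItemType(weight);
        if (actual != expected) {
            throw new AssertionError(weight + "g: expected " + expected + " but got " + actual);
        }
    }

    // Hypothetical helper: true if the weight is rejected with IllegalArgumentException.
    static boolean rejects(int weight) {
        try {
            classifyItemType(weight);
            return false;
        } catch (IllegalArgumentException expected) {
            return true;
        }
    }

    public static void main(String[] args) {
        if (!rejects(-10)) {                 // invalid partition
            throw new AssertionError("-10g should be rejected");
        }
        check(Item.LETTER, 55);              // partition 1
        check(Item.SMALL_PARCEL, 660);       // partition 2
        check(Item.LARGE_PARCEL, 4300);      // partition 3
        System.out.println("All partition checks passed");
    }
}
```

Swapping 55, 660 and 4300 for 240, 1800 and 2050 would exercise exactly the same partitions, which is the point of the technique.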

How can Diffblue Cover help?

With the above example, Diffblue Cover can automatically produce equivalence partition style tests for every partition above, including the invalid partition case, all by just clicking “Write Tests”!

Boundary Value Analysis (BVA)

The second technique—boundary value analysis—extends the equivalence partitioning approach by focusing testing inputs on the “edges” of the partitions. The idea for doing this originated from the observation that programming issues often cluster around the boundaries, or range limits, of input values.

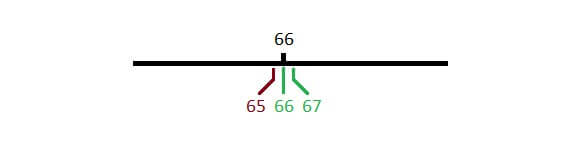

For example, a really simple method that checks if a person has reached UK state pension age (66) would be:

    public static boolean isPensionAge(int age) {
        return age >= 66;
    }

The boundary values to test this method (with outcomes) here would be the inputs 65 (false), 66 (true), and 67 (true).

BVA-style test cases pin down the desired behavior of the code much more closely than EP-style ones, which could use 42 (false) and 103 (true) as test cases for the two partitions. If, say, the pension age in the code is unintentionally changed from 66 to 67, those EP-style tests will still pass and so will not catch the change, whereas the BVA-style test for 66 will fail.
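The difference can be made concrete with a small sketch. It runs the same EP-style and BVA-style checks against the original method and against a deliberately regressed copy that uses 67 as the threshold. The class name `PensionAgeBvaSketch`, the regressed method and the two `passes...` helpers are all invented for this illustration.

```java
import java.util.function.IntPredicate;

// Sketch comparing EP-style and BVA-style checks against a regressed threshold.
final class PensionAgeBvaSketch {

    // The method from the example above.
    static boolean isPensionAge(int age) {
        return age >= 66;
    }

    // Hypothetical regression: the threshold has slipped from 66 to 67.
    static boolean isPensionAgeRegressed(int age) {
        return age >= 67;
    }

    // EP-style: one arbitrary value from each partition.
    static boolean passesEpChecks(IntPredicate impl) {
        return !impl.test(42) && impl.test(103);
    }

    // BVA-style: the values on and either side of the boundary.
    static boolean passesBvaChecks(IntPredicate impl) {
        return !impl.test(65) && impl.test(66) && impl.test(67);
    }

    public static void main(String[] args) {
        System.out.println("EP,  original:  " + passesEpChecks(PensionAgeBvaSketch::isPensionAge));
        System.out.println("EP,  regressed: " + passesEpChecks(PensionAgeBvaSketch::isPensionAgeRegressed));
        System.out.println("BVA, original:  " + passesBvaChecks(PensionAgeBvaSketch::isPensionAge));
        System.out.println("BVA, regressed: " + passesBvaChecks(PensionAgeBvaSketch::isPensionAgeRegressed));
    }
}
```

Only the last line reports `false`: the regressed method still satisfies the EP-style checks, but it returns false for 66 and so fails the BVA-style ones.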

How can Diffblue Cover help?

Currently, Diffblue Cover cannot create BVA-style tests out-of-the-box. However, it is quite easy to modify the EP-style tests that Diffblue Cover can produce into BVA-style tests by following the description above.
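As an illustration of that kind of modification, here is a hand-written sketch (not output from Diffblue Cover) that moves the postage example's inputs from the middle of each partition to its edges. The class name `PostageBvaSketch` and its helpers are hypothetical, and the method under test is copied in so the example is self-contained.

```java
import java.util.LinkedHashMap;
import java.util.Map;

// Sketch: BVA-style inputs for the postage example, placed on the partition edges.
final class PostageBvaSketch {

    enum Item { LETTER, SMALL_PARCEL, LARGE_PARCEL }

    // Copy of the method under test from the postage example.
    static Item classifyItemType(int itemWeight) {
        if (itemWeight < 0) {
            throw new IllegalArgumentException("itemWeight must not be negative");
        }
        if (itemWeight < 500) {
            return Item.LETTER;
        } else if (itemWeight < 2000) {
            return Item.SMALL_PARCEL;
        } else {
            return Item.LARGE_PARCEL;
        }
    }

    // Valid inputs on either side of each boundary, with the expected result.
    static Map<Integer, Item> boundaryCases() {
        Map<Integer, Item> cases = new LinkedHashMap<>();
        cases.put(0, Item.LETTER);            // lowest valid weight
        cases.put(499, Item.LETTER);          // just below the 500g boundary
        cases.put(500, Item.SMALL_PARCEL);    // on the 500g boundary
        cases.put(1999, Item.SMALL_PARCEL);   // just below the 2kg boundary
        cases.put(2000, Item.LARGE_PARCEL);   // on the 2kg boundary
        return cases;
    }

    // The invalid side of the lowest boundary: -1 must be rejected.
    static boolean rejectsJustBelowZero() {
        try {
            classifyItemType(-1);
            return false;
        } catch (IllegalArgumentException expected) {
            return true;
        }
    }

    public static void main(String[] args) {
        if (!rejectsJustBelowZero()) {
            throw new AssertionError("-1g should be rejected");
        }
        for (Map.Entry<Integer, Item> c : boundaryCases().entrySet()) {
            Item actual = classifyItemType(c.getKey());
            if (actual != c.getValue()) {
                throw new AssertionError(c.getKey() + "g: expected " + c.getValue() + " but got " + actual);
            }
        }
        System.out.println("All boundary checks passed");
    }
}
```

This uses six inputs where the EP-style version needed four, and the gap widens as the number of boundaries grows.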

It’s worth noting that BVA generally produces significantly more test cases than EP, and a larger number of tests comes with additional maintenance overhead, so you should judge when this style of testing is appropriate.

If you’d like to try Diffblue Cover yourself, download our free Community Edition, an IntelliJ plugin that creates unit tests for open source Java projects.