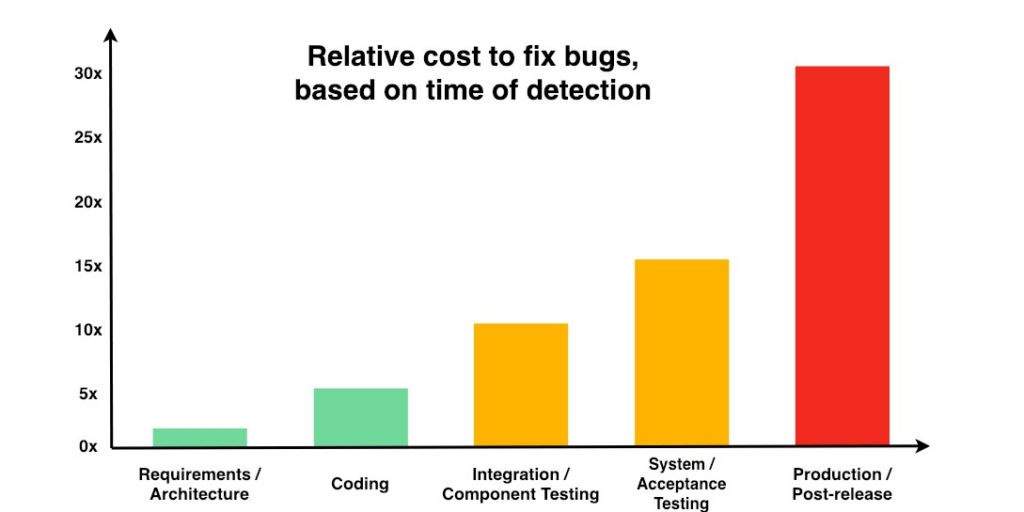

It’s widely acknowledged that the cost of fixing bugs increases drastically when they are identified in later stages of the software development lifecycle (SDLC). In fact, a bug detected in production or post-release is 30 times more expensive to fix than one found in the requirements/architecture stage. Because AI-generated unit regression tests are small and plentiful, they compile and run quickly and pinpoint errors very early in the pipeline, well before traditional regression tests run.

This makes it easier to find and fix mistakes when doing so is cheapest and easiest (before the developer has moved on to the next task), and it also drives ownership and accountability for quality.

Image Source: The Exponential Cost of Fixing Bugs on DeepSource.io, with data from NIST

Another key advantage of AI-generated unit regression tests is the speed at which they can be created, and the subsequent time and cost savings. Though many organizations have code coverage goals of around 60-80%, quickly increasing existing code coverage by even 20% can allow developers to catch regressions that could otherwise have huge impacts at later stages of the software development lifecycle. The cost of manually writing enough unit tests to increase coverage by this amount would be substantial, as demonstrated below.

Calculating savings of AI-generated unit regression tests

Findings from the 2019 Diffblue developer survey showed that the time spent writing unit tests costs companies an average of £14,287 per developer, per year. With an average of 45 developers employed at the companies included in the study, the typical unit testing cost for a mid-size company (with at least 500 employees) is approximately £643,000 per year, plus ad hoc maintenance to keep unit tests updated after they’re written.

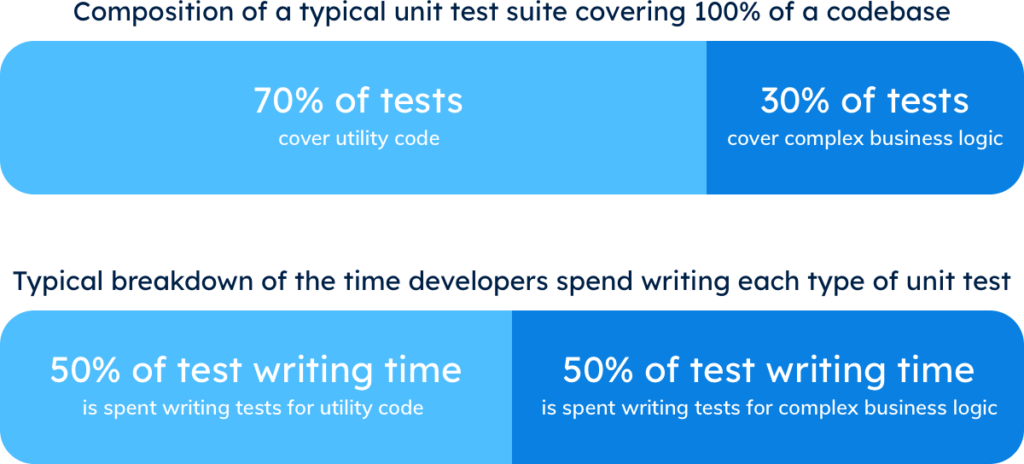

As a thought experiment to demonstrate the time saved by unit regression tests, suppose that 30% of the possible unit tests for an application cover complex business logic, and that these complex tests take up 50% of the time a developer spends writing unit tests. When it comes to complex business logic, a human will often produce more accurate unit tests than an AI tool.

Running Diffblue Cover will create unit regression tests for utility code that add, on average, 35% additional coverage for the whole application. In this case, AI-generated tests for this utility code yield a 1,000x increase in authoring speed. Because the tests are automatically maintained by Diffblue Cover, they require no additional time or work to keep them up-to-date.

As a result, using Diffblue Cover to create unit regression tests would save an organization, on average, up to 25% of the total time (and cost) it spends on unit testing. For the organizations included in the above study, this would amount to average savings of about £160,000 per year, without even accounting for the time saved by automatically maintaining the tests.
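The arithmetic behind these figures can be sketched in a few lines of Java. This is only one reading of the thought experiment, not a published Diffblue formula: it assumes that authoring time is spread evenly across the utility-code tests, that 35% coverage of the whole application corresponds to 35% of all possible tests, and that generated tests cost effectively no authoring time. The class and method names are hypothetical.

```java
import java.util.Locale;

// Sketch of the thought experiment's arithmetic. All names are hypothetical,
// and the model rests on the assumptions stated in the paragraph above.
final class UnitTestSavings {

    /** Annual unit-testing cost: cost per developer multiplied by head count. */
    static double annualCost(double costPerDeveloper, int developers) {
        return costPerDeveloper * developers;
    }

    /**
     * Fraction of total unit-testing time saved when generated tests replace
     * hand-written tests for part of the utility code.
     *
     * @param complexTestShare share of all tests that cover complex logic (0.30)
     * @param complexTimeShare share of authoring time those tests consume (0.50)
     * @param coverageAdded    coverage added across the whole application (0.35)
     */
    static double fractionOfTimeSaved(double complexTestShare,
                                      double complexTimeShare,
                                      double coverageAdded) {
        double utilityTestShare = 1.0 - complexTestShare; // tests for utility code
        double utilityTimeShare = 1.0 - complexTimeShare; // time spent on those tests
        // Portion of the utility tests that no longer need to be hand-written.
        double automatedPortion = Math.min(1.0, coverageAdded / utilityTestShare);
        return utilityTimeShare * automatedPortion;
    }

    public static void main(String[] args) {
        double cost = annualCost(14_287, 45);
        double saved = fractionOfTimeSaved(0.30, 0.50, 0.35);
        System.out.printf(Locale.ROOT, "Annual unit-testing cost: GBP %.0f%n", cost);
        System.out.printf(Locale.ROOT, "Share of time saved: %.0f%%%n", saved * 100);
        System.out.printf(Locale.ROOT, "Estimated annual saving: GBP %.0f%n", cost * saved);
    }
}
```

With the survey figures, the annual cost comes to £642,915 and the saving to roughly £160,700, in line with the rounded £643,000 and £160,000 quoted in the text. Changing the three shares shows how sensitive the result is to the assumed split between complex and utility tests.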

The time developers save by using unit regression tests could also be reinvested in writing more unit tests for the highly complex business logic that would benefit from a human author, further improving the quality and resilience of the company’s code.

Demonstrated Savings from Diffblue Cover

In practice, results from Diffblue Cover bear out the calculations above. For one customer, Diffblue Cover created 3,200 tests for a back-end application overnight, 1,000 times faster than writing the equivalent number manually.

This saved the company over one person-year of effort and provided more confidence in application stability when adding new code, which in turn improved the speed at which engineering teams could deliver business value.

Diffblue Cover also increased coverage for another module within an important back-end system by 36%, and picked up on edge cases in other applications that could have led to customer-impacting incidents.

How to get started with unit regression tests

Unit regression tests are created by Diffblue Cover and can currently be made for Java code. Diffblue Cover supplements the necessary work of creating tests for teams that are already strapped for time, allowing them to spend more of their time on the most valuable and creative aspects of their work.

Diffblue Cover automatically writes tests for new code (e.g., a development branch), and because a new baseline test suite is created each time Diffblue Cover is run, the tests stay up-to-date without manual maintenance.
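To make the idea of a baseline concrete, here is a hand-written sketch of what a unit regression check does: it records how a small piece of code behaves today and fails if a later change alters that behaviour. This is an illustration only, not output from Diffblue Cover. `PriceFormatter` and the check class are hypothetical, and a real suite would normally use a test framework such as JUnit rather than plain Java.

```java
import java.util.Locale;

// Hypothetical utility code under test: formats an amount in pence as pounds.
final class PriceFormatter {
    static String format(long pence) {
        return String.format(Locale.ROOT, "%d.%02d", pence / 100, pence % 100);
    }
}

// Hand-written illustration of a unit regression check (not tool output).
// Each expected value pins the behaviour observed when the baseline was taken.
final class PriceFormatterRegressionCheck {

    /** Returns true while the method still behaves as it did at baseline time. */
    static boolean matchesBaseline() {
        return PriceFormatter.format(0).equals("0.00")
            && PriceFormatter.format(5).equals("0.05")
            && PriceFormatter.format(1999).equals("19.99");
    }

    public static void main(String[] args) {
        if (!matchesBaseline()) {
            // A failure here points directly at the unit whose behaviour changed.
            throw new AssertionError("PriceFormatter.format no longer matches the baseline");
        }
        System.out.println("Behaviour matches the recorded baseline");
    }
}
```

Because each check targets a single small unit, a failure points at the method that changed rather than at a broad end-to-end scenario, which is what lets these tests locate regressions early.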

Unit tests don’t have to be expensive. Sign up for a free trial of Diffblue Cover to see how unit regression tests can efficiently improve your company’s code quality.