One common development practice that customers ask us about is Test-Driven Development (TDD). Surely TDD is mutually exclusive with generating tests automatically, isn’t it? No! They are mutually supportive techniques, and in this post we’re going to explain why.

TDD in a Nutshell

There are many articles written about TDD, and indeed there are several variations or ‘schools’ of TDD. Rather than delve into the details of any particular school, we consider TDD in general.

In TDD, the developer starts by writing tests that express the requirements. This is usually an iterative process: start with minimal requirements, write tests, write code, and then gradually expand and refine the requirements, the tests and the code in small steps, refactoring as needed, for instance as new failure modes are discovered or as new features require.

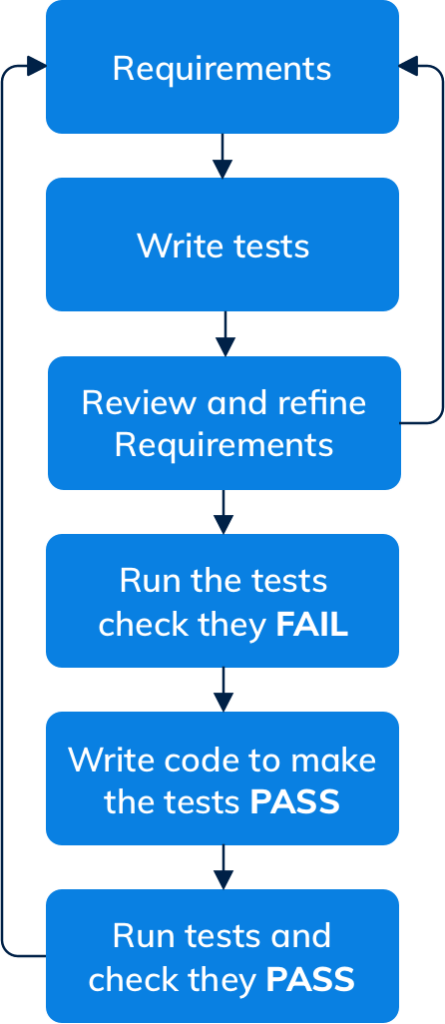

The tests then become the specification of how the software should work. The tests should cover both the user stories that form the core of the requirements and the exception conditions that could be encountered. Typically, a developer practicing TDD might have a workflow that looks something like this:

This workflow leads to a focus on really understanding and refining the requirements and writing tests, along with writing just enough code to make the tests pass. It can also lead to code that is inherently designed to be easily testable.
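To make the test-first ordering concrete, here is a minimal sketch in Java. Everything in it is an assumption made for illustration: the requirement (10% off orders of 100.00 or more, negative subtotals rejected), the class `PriceCalculatorTddSketch`, and the method `totalInCents` do not come from any real project. It uses plain checks instead of a test framework so that it runs on its own; in practice these would normally be JUnit tests.

```java
class PriceCalculatorTddSketch {

    // Step 1 (written first): the requirements, expressed as executable checks.
    static void runRequirementChecks() {
        // User story: orders below the threshold are charged in full.
        check(totalInCents(5_000) == 5_000, "no discount below 100.00");
        // User story: orders of exactly 100.00 qualify for 10% off.
        check(totalInCents(10_000) == 9_000, "10% off at 100.00");
        // Exception condition: a negative subtotal is rejected.
        boolean rejected = false;
        try {
            totalInCents(-1);
        } catch (IllegalArgumentException expected) {
            rejected = true;
        }
        check(rejected, "negative subtotal is rejected");
    }

    // Step 2 (written second): just enough code to make the checks pass.
    static long totalInCents(long subtotalInCents) {
        if (subtotalInCents < 0) {
            throw new IllegalArgumentException("subtotal must not be negative");
        }
        return subtotalInCents >= 10_000 ? subtotalInCents * 90 / 100 : subtotalInCents;
    }

    // Tiny stand-in for a test framework assertion (hypothetical helper).
    static void check(boolean condition, String requirement) {
        if (!condition) {
            throw new AssertionError("requirement not met: " + requirement);
        }
    }

    public static void main(String[] args) {
        runRequirementChecks();
        System.out.println("all requirement checks pass");
    }
}
```

Note that the checks cover both a user story and an exception condition, and that the implementation contains nothing the checks do not demand.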

The Trouble with TDD

Although TDD is popular, it can be hard to practice: a survey of developers published earlier this year found that, although 41% of the respondents said their organizations have fully adopted TDD, only 8% said they write tests before code at least 80% of the time—the definition of TDD.

It can be hard to write tests for code that isn’t written yet, because how the code satisfies the requirements (the semantics of the implementation) affects how it is tested. This leads to a situation where code and tests are interdependent and evolve in parallel. In addition, developers often have to work on systems that don’t have good unit tests to start with, and business pressures often mean there’s no time to refactor everything using TDD.

So how can developers retain the benefits of focusing on the requirements, while also working more efficiently? Automatic test writing.

Automatic Test Writing

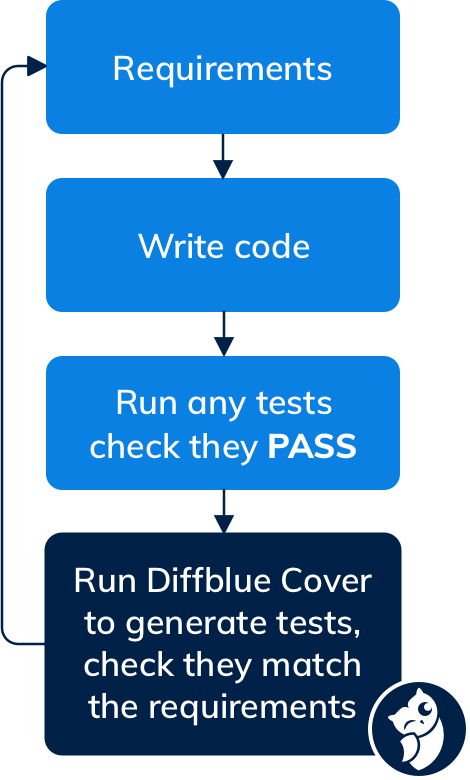

With Diffblue Cover, unit tests are written automatically from the existing code. Of course, the developer still needs to understand the requirements and think about appropriate designs, but now much of the more tedious parts of constructing unit tests is performed automatically. The developer can now look at the tests written by Diffblue Cover and see if they match the requirements—if they don’t, then the focus shifts to refining the code. The workflow then ends up looking something like this:

This is particularly valuable with systems that don’t have a good set of existing tests. Not only do you get a set of unit tests that find regressions, but the tests help the developer understand the code. This leads to a workflow where developers have a blended focus across understanding requirements, writing code and reviewing tests against those requirements and expectations.
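As a rough illustration of tests that are derived from existing code, the sketch below pins down what a small piece of untested legacy code does today. It is hand-written for this post and is not actual Diffblue Cover output; the class `LegacyUsernameCharacterization` and the method `normalize` are hypothetical.

```java
import java.util.Locale;

class LegacyUsernameCharacterization {

    // Hypothetical legacy method that has no existing tests.
    static String normalize(String raw) {
        if (raw == null) {
            return "";
        }
        return raw.trim().toLowerCase(Locale.ROOT);
    }

    // Tiny stand-in for a test framework assertion (hypothetical helper).
    static void expectEquals(String expected, String actual) {
        if (!expected.equals(actual)) {
            throw new AssertionError("expected <" + expected + "> but was <" + actual + ">");
        }
    }

    public static void main(String[] args) {
        // Each check records what the code does today, not what a specification says.
        expectEquals("alice", normalize("  Alice "));
        // Null is silently turned into an empty string. Is that what the requirements want?
        expectEquals("", normalize(null));
        // Whitespace-only input also collapses to an empty string.
        expectEquals("", normalize("   "));
        System.out.println("current behavior pinned");
    }
}
```

The checks guard against regressions, and reading them is also a quick way to learn how the code treats edge cases such as `null`, which the reviewer can then compare against the requirements.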

The Chicken and the Egg

Comparing the two approaches, it looks like they encourage developers to follow two different mindsets, so how can they be mutually beneficial? In TDD, the tests that you write will tell you that your code does at least what it should do—assuming, of course, that the tests accurately reflect the requirements, and that all the possible failure modes and exceptional behavior have been accounted for.

TDD tests, depending on the particular variant of TDD, are often ‘black box’ tests; the code did not exist when they were written, so they know nothing about the implementation details.

Alternatively, a more ‘white box’ style may be used, in which code and tests evolve closely, using techniques such as mocking. But do those tests cover all of the behavior of the code? When the code was written, did the implementation choices create a new failure mode that was not considered when the tests were written? How might those failure modes interact with other existing features? Did the new code expose a previously unreachable and untested failure mode in older code? Did the mocking sufficiently reflect possible real behaviors?

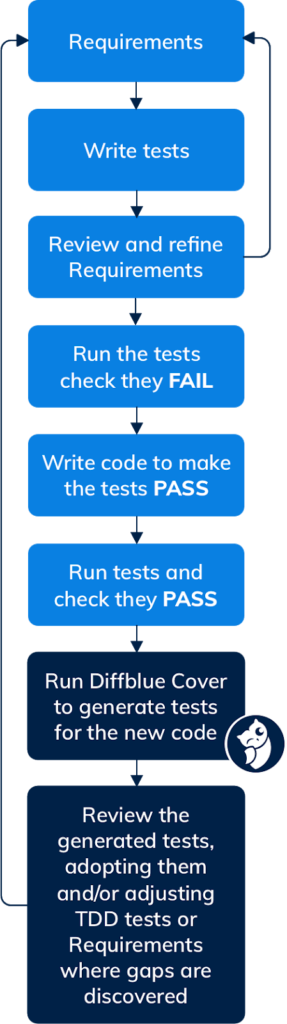

This is where Diffblue Cover complements TDD: by automatically generating tests for the actual code that was written, any additional failure modes introduced at that time get exposed as test cases and can be reviewed against your handwritten tests, or against the requirements. This allows appropriate early action to be taken, such as adopting the Diffblue tests into your test suite, using the Diffblue tests as a template for further extension by the developer, modifying your code to remove the erroneous behavior, or even refining your TDD tests or requirements if the Diffblue tests expose behavior that needs to be considered. This leads to a new workflow:

In this way, Diffblue Cover gives the developer a skeleton for writing tests for previously untested conditions, and acts as an additional safety net that catches unexpected behavior in the code, gaps in the TDD tests, or ambiguities in the requirements. It encourages questions to be asked and assumptions to be challenged, leading to higher quality work and reduced risk.
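The kind of gap described above can be sketched with a small, hypothetical Java example. The class `AverageGapSketch` and the method `average` are invented for illustration, and the second check is written by hand to stand in for a test derived from the code; it is not real Diffblue Cover output.

```java
import java.util.Arrays;
import java.util.Collections;
import java.util.List;

class AverageGapSketch {

    // Implementation written to satisfy the test-first check below.
    // The implementation choice (dividing by the list size) quietly
    // introduces a behavior for empty lists that no requirement mentioned.
    static double average(List<Integer> values) {
        double sum = 0;
        for (int value : values) {
            sum += value;
        }
        return sum / values.size();
    }

    public static void main(String[] args) {
        // Test written first, from the requirement "return the mean of the values".
        if (average(Arrays.asList(2, 4)) != 3.0) {
            throw new AssertionError("mean of 2 and 4 should be 3.0");
        }

        // Check derived from the code as written: an empty list yields NaN.
        // Whether NaN, zero, or an exception is correct is a requirements question.
        if (!Double.isNaN(average(Collections.<Integer>emptyList()))) {
            throw new AssertionError("expected NaN for an empty list");
        }
        System.out.println("both checks pass; the empty-list behavior needs review");
    }
}
```

The second check does not say the code is right. It makes the behavior visible so the developer can adopt it as a test, change the code, or go back and refine the requirements.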

Interested in getting tests for your code? Try it out for yourself!

Note: Since there are many schools of thought about the specific processes that make up TDD, this post has been edited to clarify that the diagrams represent simplified TDD workflows with the core element almost everyone can agree on: writing tests before code.