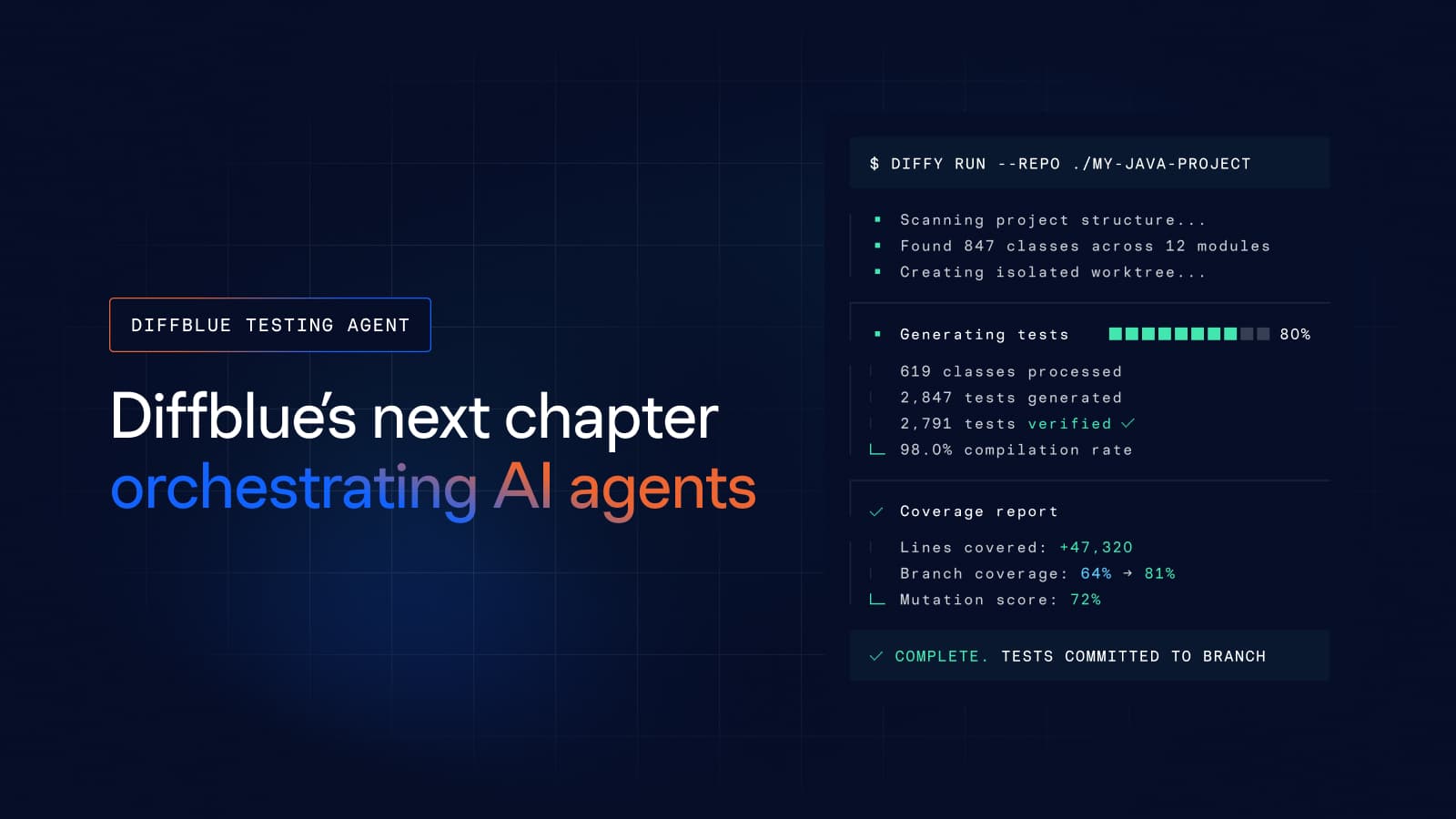

We’re making the new Diffblue Testing Agent available for enterprise evaluation, and with it, a benchmark that quantifies the problem it solves. In a head-to-head test across 8 enterprise Java repositories, a senior developer using Claude Code achieved 32% line coverage in time-boxed sessions. Diffblue Testing Agent, running autonomously, achieved 81%, with 2.5x higher mutation coverage and near-zero developer time. This moment marks a turning point: moving beyond isolated gains toward a model where AI can be trusted to execute complex engineering work consistently, predictably, and at scale.

2026: Reliable productivity gains without sacrificing quality

A decade into Diffblue’s journey, the conversation around AI in software engineering is no longer about possibility — it’s about reliability at scale. While AI coding agents have rapidly become embedded in developer workflows, their promise of productivity often breaks down when applied to real-world, enterprise systems.

Our focus has always been the transition from manual software engineering toward autonomous software product engineering. We began this journey with Diffblue Cover, focusing on the automation of regression unit test generation. The product has been adopted by several of the largest, most demanding software development organizations in banking, insurance, manufacturing, life sciences, and technology to help them modernize applications efficiently, accelerate innovation, and reduce toil for their teams. We’ll continue to evolve this product and support our customers.

But in 2026, the challenge has shifted. While AI coding agents like Claude Code, GitHub Copilot, and Gemini are everywhere, they have introduced new bottlenecks that prevent effective deployment at enterprise scale.

The industry is currently grappling with a fundamental question: How do software development teams get reliable productivity gains from AI at scale without compromising on quality?

In 2025, it became clear that the chat-with-code model is hitting a ceiling. To move forward, we need to stop treating AI as a digital assistant and start treating it as a managed engineering workforce. This is why we developed Diffblue Agents: to bridge the gap between AI that writes code and AI that successfully automates complex, long-running, multi-step engineering processes. To be clear, this is not a new coding agent or LLM. Diffblue Agents are the orchestration and verification layer required to harness the power of AI coding agents to do actual work reliably and at predictable, enterprise quality. And our first product based on our new Diffblue Agents platform – the Diffblue Testing Agent – is the first of many to follow.

The AI Rollout Challenge

AI coding agents are revolutionizing software development at every level across the industry. Their capabilities have enabled a new breed of developer to churn out features at breakneck speed. However, proper software engineering is something different. Lowering quality bars so that teams can cope with increased review and QA loads is not the solution. General AI coding agents are designed for flexibility and the ability to solve any problem in any way, whereas enterprises require predictability in the way tasks are performed and high quality in the resulting output. We have identified the following points of friction that prevent these tools from consistently delivering ROI:

- The Standardization Problem

Productivity dies with ad-hoc prompting. Without a unified approach, developers spend time reinventing the wheel, leading to as many different levels of quality as there are individuals in the team. It is essential that the AI uses the same guardrails and standardized processes across the organization to achieve consistent results.

- The Cost of In-House Customization

The lack of standardization leads directly to a massive rollout problem. Currently, enterprises attempt to achieve consistency by building their own in-house workflows and custom extensions. This work is usually assigned to platform teams who lack the specific expertise or bandwidth for such specialized development, forcing them away from supporting the enterprise’s core business.

- The Vendor Lock-in and Volatility Risk

Betting on a single vendor for AI coding agents in a volatile market is risky. Attempting to achieve standardization by focusing on a single platform puts all an enterprise’s eggs into one basket. This creates high switching costs, especially when internal teams use a specific vendor’s custom mechanisms to implement workflow extensions, leaving the enterprise trapped by its own customization efforts.

- The Predictability Problem

Executing an AI-driven workflow must produce results that do not require constant manual intervention. If an agent produces output that doesn’t quite work, a human may spend half an hour babysitting it, re-prompting it to fix its own mistakes. In a large development team, this rework tax eats into the expected productivity gains. Predictably high-quality outputs are key to actual efficiency.

- The Scale Challenge

When performing tasks at a codebase level, such as refitting regression tests during modernization efforts, reliability is the only metric that matters. The system must be able to scope the work, verify the output, and handle its own failures without flagging a developer for help. Human intervention is physically impossible due to the sheer volume of changes.

Five Principles of Diffblue Agents

We architected Diffblue Agents around five core pillars. These design decisions are specifically intended to move organizations away from experimental AI usage and toward a managed engineering workforce that provides reliability for engineering and engineering for reliability.

#1: Platform Independence – Bring Your Own Agent

In a volatile market, choosing a single AI platform is a strategic gamble. To mitigate the Vendor Lock-in and Volatility Risk, Diffblue Agents follow a “bring your own agent” model that works with a wide range of LLM-based coding agents. This architecture ensures uniform output quality regardless of the underlying model. Because we provide the orchestration, you can switch providers or use multiple models simultaneously without losing consistency or facing high switching costs.

#2: Reliable Output Across All Interfaces

Standardization must meet developers where they already work. Diffblue Agents ensure that the same reliable, managed workflows are available in all user interfaces provided by your agent, including the IDE, CLI, and cloud workspaces. This ubiquity ensures that whether a developer is writing a single class or refactoring a module, the output remains predictably high-quality. For at-scale workflows, we provide our own dedicated CLI to manage tasks that are beyond the scope of a standard developer interaction.

#3: CLI for Massive Engineering Jobs

At the codebase level, certain tasks are physically impossible for humans to audit due to their scale. To meet the Scale Challenge, our dedicated CLI orchestrates agents for these massive engineering jobs, which are particularly critical in application modernization. We currently provide workflows for the automated generation of regression tests; workflows for large-scale refactorings and complex dependency upgrades are coming soon. The system manages the repercussions of these changes across thousands of files, ensuring the entire codebase remains functional without requiring a human to manually intervene in the process.

#4: Proven, AI-Enabled Product Engineering Workflows

At Diffblue, we operate with a fully AI-enabled, human-in-the-loop product engineering workflow that spans the entire lifecycle, from strategic planning and implementation to shipping and processing customer feedback. These workflows apply to almost any software development organization. They are designed to work across all the agents we use and are thoroughly tested to deliver consistent results every time they run. We are continuously improving these internal processes and will soon launch them as part of Diffblue Agents so that every organization can benefit from this level of automation and reduce their Cost of In-House Customization.

#5: Predictability Through Guardrails

General AI coding agents are optimized for flexibility. To solve the Predictability Problem, we trade that open-ended flexibility for predictability by enforcing strict engineering guardrails. While agents are instructed to produce code, Diffblue Agents ensure that the resulting work is always tested and checked; noncompliant changes are automatically fixed and unintended changes are automatically cleaned up. This ensures quality is consistent and that every change is ready to be pushed to your repository with a high probability of passing CI without any manual rework.
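To make the guardrail idea concrete, here is a minimal, illustrative sketch of a generate, check, and retry loop. It is not Diffblue's implementation: the names `Change`, `Outcome`, `run_with_guardrails`, `fake_agent`, and `fake_check` are all hypothetical, and the "agent" and "check" are stand-in functions rather than a real coding agent or test run. The sketch only assumes that an agent can be re-invoked with feedback and that a check returns a list of problems.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Set


@dataclass
class Change:
    """A proposed change, modeled as file path -> new content (hypothetical)."""
    files: Dict[str, str]


@dataclass
class Outcome:
    accepted: bool
    attempts: int
    change: Change
    problems: List[str] = field(default_factory=list)


def run_with_guardrails(
    generate: Callable[[List[str]], Change],  # stand-in for any coding agent
    check: Callable[[Change], List[str]],     # stand-in for build/test checks
    allowed_paths: Set[str],
    max_attempts: int = 3,
) -> Outcome:
    """Ask the agent for a change, strip out-of-scope edits, verify, retry."""
    feedback: List[str] = []
    change = Change(files={})
    for attempt in range(1, max_attempts + 1):
        change = generate(feedback)
        # Clean up unintended changes: keep only files inside the agreed scope.
        change = Change({p: c for p, c in change.files.items() if p in allowed_paths})
        # Verify the remaining change; an empty list means it is compliant.
        feedback = check(change)
        if not feedback:
            return Outcome(True, attempt, change)
    # Out of attempts: report the failure instead of handing over broken work.
    return Outcome(False, max_attempts, change, feedback)


# Usage with stand-ins: the first attempt is broken and touches an extra file.
def fake_agent(feedback: List[str]) -> Change:
    content = "fixed" if feedback else "broken"
    return Change({"FooTest.java": content, "README.md": "unrelated edit"})


def fake_check(change: Change) -> List[str]:
    ok = change.files.get("FooTest.java") == "fixed"
    return [] if ok else ["FooTest.java does not pass"]


outcome = run_with_guardrails(fake_agent, fake_check, {"FooTest.java"})
print(outcome.accepted, outcome.attempts, sorted(outcome.change.files))
```

In this toy run the second attempt is accepted, and the unrelated `README.md` edit never reaches the final change. The design point is that generation and verification are separate steps, so the same checks apply no matter which agent produced the change.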

Toward Autonomous Software Product Engineering

Our initial focus with the Diffblue Testing Agent is at-scale regression unit test generation via a batch-mode CLI. This provides a high-value entry point where the benefits of autonomous execution are immediately visible. However, as explained above, our vision extends to covering the entire software and product engineering lifecycle.

Rethinking the Engineering Process

Related to this is a deeper question we are still answering: are traditional software engineering processes even suitable for adaptation to AI coding agents? Is it possible to just automate existing Agile processes, or do they need to be replaced by something entirely new from the ground up?

While we explore these questions, we recognize that human engineers remain essential for high-level design and requirement definition. Our architecture keeps the human in the loop where judgment is needed, but it creates a clear path toward full automation of the more mechanical aspects of the lifecycle. By separating the generation from the verification and orchestration, we are building a foundation that allows enterprises to move AI from a chat experiment into a reliable, autonomous engineering workforce.

Learn more about Diffblue Testing Agent, try it for yourself, or request an evaluation today.