Introducing the Diffblue Testing Agent — the first in a new generation of purpose-built AI agents for enterprise software engineering.

The testing gap AI coding platforms can’t close

AI coding agents have changed software development. If your engineering organisation has invested in GitHub Copilot, Claude Code, or any of the rapidly maturing agentic coding platforms, you’ve made a popular choice. These tools deliver productivity gains on individual developer tasks, and adoption is accelerating across every industry.

But a fundamental gap remains. Most enterprise codebases carry years — sometimes decades — of production code with minimal test coverage. Every refactoring effort, every Java migration, every monolith decomposition hits the same wall: without tests, you can’t move safely. AI coding platforms haven’t solved this. They excel at individual tasks in an interactive session, but they can’t autonomously scope, generate, and verify unit tests across hundreds of thousands of lines of existing code.

Anthropic’s 2026 Agentic Coding Trends Report puts a number on this: developers now use AI in around 60% of their work, but fully delegate only 0–20% of tasks — primarily those that are, in their words, “easily verifiable.” Test generation should be the ideal candidate for full delegation. It’s high-volume, repetitive, and verifiable. So why aren’t teams delegating it?

Introducing Diffblue Testing Agent

Today, we’re launching Diffblue Testing Agent — the first in a series of purpose-built AI agents based on the Diffblue Agents platform, designed to bring enterprise reliability to the tasks that AI coding assistants still can’t do alone.

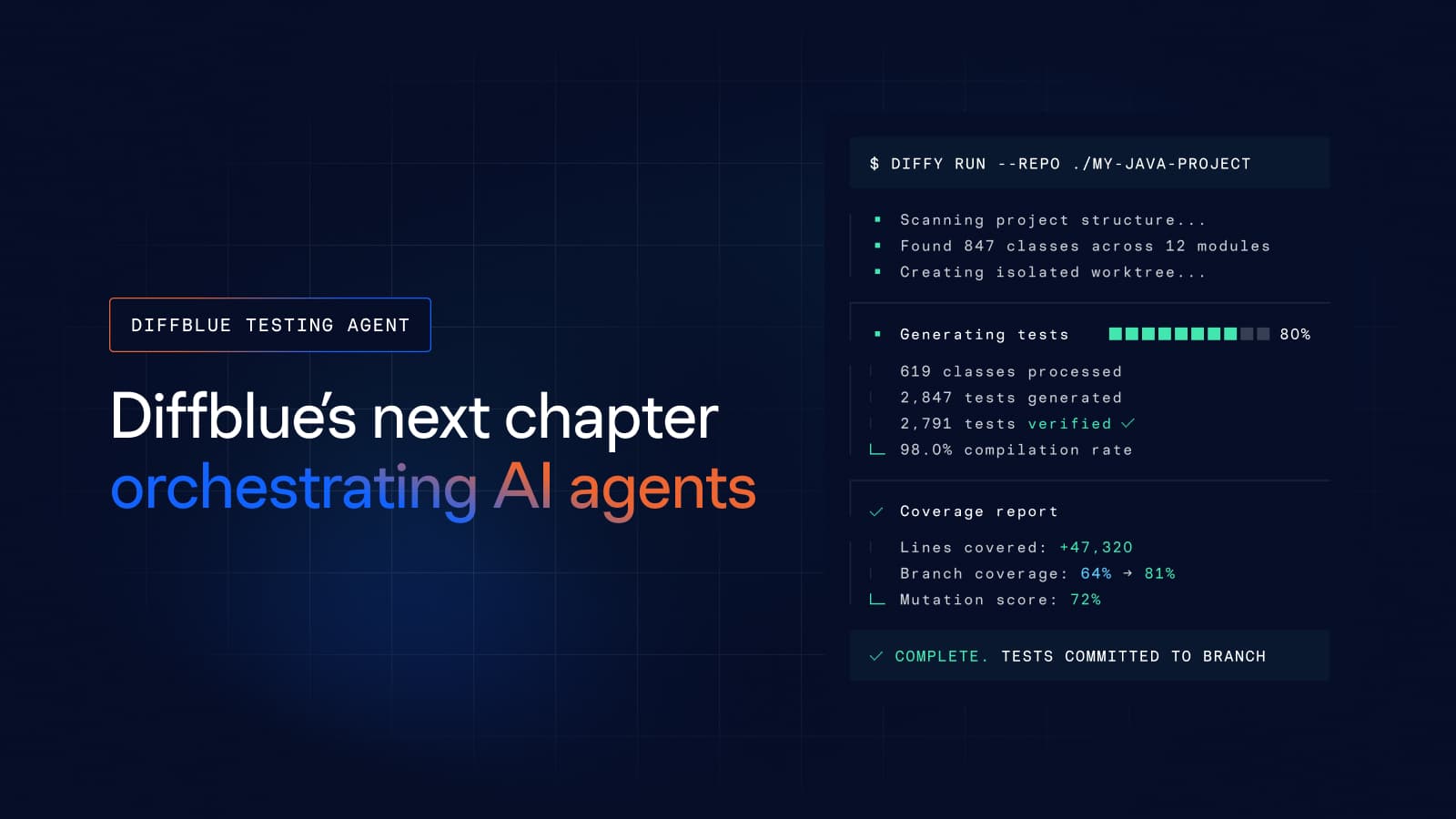

Diffblue Testing Agent works with your existing AI coding platform to generate, verify, and fix unit tests autonomously across entire codebases. At launch it supports Claude Code and GitHub Copilot CLI, and covers Java 8–25 and Python 3.

It is not a new AI coding agent. It is not another IDE plugin. It is the orchestration and verification layer that turns your existing AI investment into a managed testing workforce — one that operates at project scale, without developer intervention, and delivers output that is ready to push to your repository.

We built Diffblue Testing Agent on the principle of Bring Your Own Agent: your platform, our engineering.

How it works

The model is straightforward. You bring your codebase and your AI coding agent of choice. Diffblue Testing Agent orchestrates the work. You get tests that compile, pass, and improve coverage. Each of the problems above has a specific architectural answer.

Autonomous at codebase scale

The Diffblue Agents CLI processes entire multi-module projects without human intervention. It scopes the work, generates tests, handles failures, and cleans up after itself. For application modernisation programmes — Java 8 to 21 migrations, monolith decompositions, framework upgrades — this is the difference between a safety net that takes years to build manually and one that’s ready in weeks.

Consistent quality through engineering guardrails, not individual prompting

Diffblue Testing Agent enforces strict engineering guardrails that ensure every developer — and every run — produces output at the same standard. We trade the open-ended flexibility of general AI coding for predictability: non-compliant changes are automatically fixed, unintended changes are automatically cleaned up, and every change is ready to pass CI without manual rework.
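To make the guardrail idea concrete, here is a minimal sketch of a generate, verify, and fix loop with cleanup. It is an illustration under stated assumptions, not Diffblue's implementation: `Verdict`, `run_with_guardrails`, the acceptance criteria, and the retry limit are all hypothetical names and choices introduced for this example.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Verdict:
    """Outcome of verifying one candidate change (all fields hypothetical)."""
    compiles: bool
    passes: bool
    new_lines_covered: int
    only_test_files_changed: bool

    def accepted(self) -> bool:
        # Keep a change only if it builds, passes, adds coverage,
        # and leaves non-test code untouched.
        return (
            self.compiles
            and self.passes
            and self.new_lines_covered > 0
            and self.only_test_files_changed
        )


def run_with_guardrails(
    generate: Callable[[], str],
    verify: Callable[[str], Verdict],
    fix: Callable[[str, Verdict], str],
    revert: Callable[[str], None],
    max_fixes: int = 2,
) -> Optional[str]:
    """Return an accepted candidate, or None after reverting a rejected one."""
    candidate = generate()
    for attempt in range(max_fixes + 1):
        verdict = verify(candidate)
        if verdict.accepted():
            return candidate
        if attempt < max_fixes:
            # Feed the failure back so the next attempt can address it.
            candidate = fix(candidate, verdict)
    # Out of attempts: clean up so nothing non-compliant is left behind.
    revert(candidate)
    return None
```

The point of the sketch is that the acceptance rule lives in the orchestration code rather than in a prompt, so the same standard applies on every run regardless of who started it.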

Purpose-built workflows, so your platform team doesn’t have to be

Diffblue Testing Agent ships with proven, tested workflows for autonomous test generation — built by test engineers, not assembled by a platform team learning on the job. This eliminates the hidden cost of in-house customisation and lets your infrastructure and platform teams stay focused on your core business.

Bring your own agent, keep your optionality

Diffblue Testing Agent integrates with Claude Code and GitHub Copilot CLI today. Because we provide the orchestration — not the underlying model — you can switch AI platforms or run multiple simultaneously without losing consistency. Our architecture ensures uniform output quality regardless of the underlying model, eliminating vendor lock-in and the high switching costs that come with building on a single vendor’s proprietary mechanisms.
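One way to picture Bring Your Own Agent is an adapter interface that the orchestration layer depends on, with the acceptance check applied identically to whatever sits behind it. The sketch below is purely illustrative: `CodingAgent`, `AgentA`, `AgentB`, `orchestrate`, and the `accept` callback are hypothetical names, not part of any Diffblue, Claude Code, or GitHub Copilot API.

```python
from typing import Callable, Dict, Iterable, Protocol


class CodingAgent(Protocol):
    """Minimal interface an agent adapter would need (hypothetical)."""

    def generate_test(self, source_file: str) -> str: ...


class AgentA:
    """Stand-in for one AI coding platform."""

    def generate_test(self, source_file: str) -> str:
        return f"# test for {source_file} (from agent A)"


class AgentB:
    """Stand-in for a different AI coding platform."""

    def generate_test(self, source_file: str) -> str:
        return f"# test for {source_file} (from agent B)"


def orchestrate(
    agent: CodingAgent,
    source_files: Iterable[str],
    accept: Callable[[str], bool],
) -> Dict[str, str]:
    """Run any agent over the files, keeping only output that passes `accept`."""
    accepted: Dict[str, str] = {}
    for path in source_files:
        test = agent.generate_test(path)
        if accept(test):  # same check whichever agent produced the test
            accepted[path] = test
    return accepted
```

Because `orchestrate` only knows about the interface, swapping `AgentA` for `AgentB` changes nothing in the acceptance logic, which is the property the paragraph above describes.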

The proof

We benchmarked two approaches across 8 enterprise-grade Java repositories totalling 31,069 coverable lines, all starting from zero test coverage. On one side: Diffblue Testing Agent, running autonomously. On the other: senior Java engineers (averaging 10 years of experience, daily AI tool users) working with Claude Code and the latest Opus model, given a 2-hour or 20-prompt budget per repository.

Each prompt in the Developer + Claude Code workflow represents a developer decision: what to target, how to instruct the agent, and how to recover when output isn’t usable. Across 8 repositories and 149 prompts, each prompt yielded an average of 67 lines of coverage. Diffblue Testing Agent’s single-prompt setup yielded 3,384 lines, a 58x advantage.

The prompt count captures what headline metrics don’t: cognitive overhead. Every prompt is a context switch, and as our benchmark team observed, most of that attention goes not to productive test authoring but to supervising an agent that drifts off-plan, claims false completions, and abandons tasks mid-execution.

The full benchmark methodology and results are available in our internal benchmark report.

What’s next

Diffblue Testing Agent is the first step. We’re building toward task-mode workflows that integrate directly into your IDE and cloud workspace, expanding language support, and deepening the test engineering capabilities that make this agent uniquely reliable.

But testing is just the beginning. The same architecture — Bring Your Own Agent, enterprise guardrails, autonomous execution at scale — applies to every high-volume, verifiable engineering task where AI coding platforms fall short today. Future Diffblue agents will extend this model to other parts of the software engineering lifecycle.

Our goal is to make AI coding platforms as reliable for engineering as they are fast for code generation.

Start your evaluation

Enterprise teams using Claude Code or GitHub Copilot CLI can try Diffblue Testing Agent free for 14 days. We are also offering a guided evaluation with defined success criteria, dedicated technical support, and a clear conversion path.
